MediaPipe is an open-source machine learning framework presented by Bazarevsky et al. at CVPR 2019.

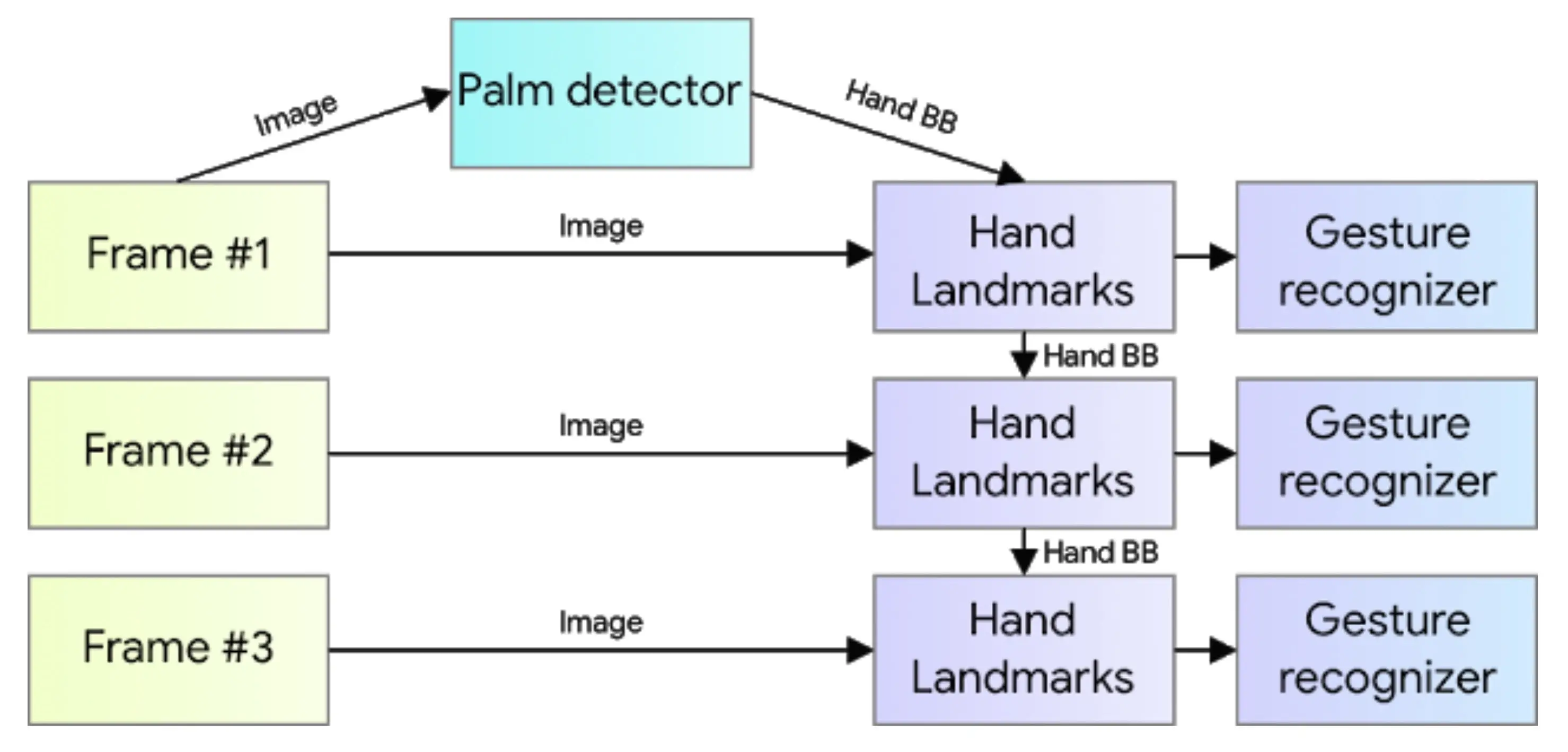

https://github.com/google/mediapipeThe hand tracking feature used there combines a single-shot palm detection algorithm and a hand landmark model (Google AI Blog: On-Device, Real-Time Hand Tracking with MediaPipe).

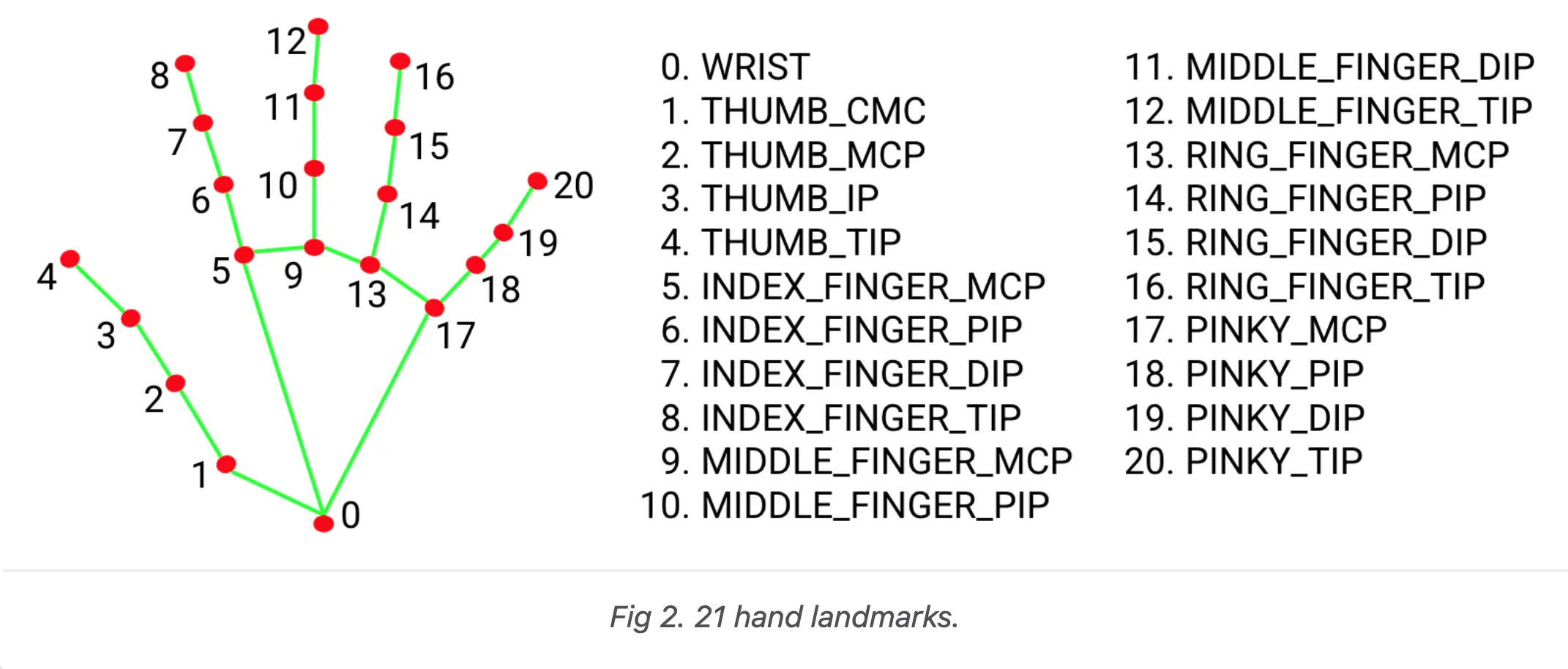

The shape of the hand can be obtained as the coordinates of the following landmarks. Inference runs every frame, and values in cv coordinates can be obtained.

https://google.github.io/mediapipe/solutions/hands

The returned landmark object allows you to extract the float coordinates as follows:

1for hand_idx, landmarks in enumerate(multi_hand_landmarks): 2 for point_idx, points in enumerate(landmarks.landmark): 3 print(f"Hand: {hand_idx}, {HAND_LANDMARK_NAMES[point_idx]}," 4 + f"x:{points.x} y:{points.y} z:{points.z}")

At this time, HAND_LANDMARK_NAMES is in the following order:

1HAND_LANDMARK_NAMES = [ 2 "wrist", 3 "thumb_1", 4 "thumb_2", 5 "thumb_3", 6 "thumb_4", 7 "index_1", 8 "index_2", 9 "index_3", 10 "index_4", 11 "middle_1", 12 "middle_2", 13 "middle_3", 14 "middle_4", 15 "ring_1", 16 "ring_2", 17 "ring_3", 18 "ring_4", 19 "pinky_1", 20 "pinky_2", 21 "pinky_3", 22 "pinky_4" 23]

This time, we will prototype data transmission via Open Sound Control for interactive artworks or gesture recognition using Wekinator, etc., using this real-time hand tracking by MediaPipe.

Script

1# Atsuya Kobayashi 2020-12-22 2# Reference: https://google.github.io/mediapipe/solutions/hands 3# LICENCE: MIT 4 5from itertools import chain 6 7import mediapipe as mp 8from cv2 import cv2 9from pythonosc import udp_client 10 11IP = "127.0.0.1" 12PORT = 7474 13VIDEO_DEVICE_ID = 0 14RELATIVE_AXIS_MODE = True 15 16HAND_LANDMARK_NAMES = [ 17 "wrist", 18 "thumb_1", 19 "thumb_2", 20 "thumb_3", 21 "thumb_4", 22 "index_1", 23 "index_2", 24 "index_3", 25 "index_4", 26 "middle_1", 27 "middle_2", 28 "middle_3", 29 "middle_4", 30 "ring_1", 31 "ring_2", 32 "ring_3", 33 "ring_4", 34 "pinky_1", 35 "pinky_2", 36 "pinky_3", 37 "pinky_4" 38] 39 40 41def extract_detected_hands_points(multi_hand_landmarks, 42 send_osc_client=None): 43 44 if multi_hand_landmarks is not None: 45 for hand_idx, landmarks in enumerate(multi_hand_landmarks): 46 for point_idx, points in enumerate(landmarks.landmark): 47 48 # if you want to check data on console 49 print(f"Hand: {hand_idx}, {HAND_LANDMARK_NAMES[point_idx]}," 50 + f"x:{points.x} y:{points.y} z:{points.z}") 51 """ 52 if you want to send data to addresses correspoding 53 to landmarks names on detected hands, use berow 54 """ 55 # if send_osc_client is not None: 56 # send_osc_client.send_message(f"/{HAND_LANDMARK_NAMES[point_idx]}", 57 # [points.x, points.y]) 58 59 """if you want to send data to single input address, use berow""" 60 if send_osc_client is not None: 61 send_osc_client.send_message( 62 f"/YOUR_OSC_ADDRESS", 63 list(chain.from_iterable([[p.x, p.y] for p in landmarks.landmark]))) 64 65 66if __name__ == "__main__": 67 68 mp_drawing = mp.solutions.drawing_utils 69 mp_hands = mp.solutions.hands 70 71 hands = mp_hands.Hands( 72 min_detection_confidence=0.5, min_tracking_confidence=0.5) 73 74 cap = cv2.VideoCapture(VIDEO_DEVICE_ID) 75 76 osc_client = udp_client.SimpleUDPClient(IP, PORT) 77 78 while cap.isOpened(): 79 success, image = cap.read() 80 if not success: 81 print("Ignoring empty camera frame.") 82 # If loading a video, use 'break' instead of 'continue'. 83 continue 84 85 image = cv2.cvtColor(cv2.flip(image, 1), cv2.COLOR_BGR2RGB) 86 image.flags.writeable = False 87 results = hands.process(image) 88 extract_detected_hands_points(results.multi_hand_landmarks, 89 send_osc_client=osc_client) 90 image.flags.writeable = True 91 image = cv2.cvtColor(image, cv2.COLOR_RGB2BGR) 92 if results.multi_hand_landmarks: 93 for hand_landmarks in results.multi_hand_landmarks: 94 mp_drawing.draw_landmarks( 95 image, hand_landmarks, mp_hands.HAND_CONNECTIONS) 96 cv2.imshow('Detected Hands', image) 97 98 if cv2.waitKey(5) & 0xFF == 27: 99 break 100 101 hands.close() 102 cap.release()