Improvise+=Chain [Interactive]: System for Real-Time Performance with GPT

Building on the generative system of the autonomous audiovisual music installation Improvise+=Chain, we have developed Improvise+=Chain [Interactive], a real-time ensemble system that enables collaborative improvised jam sessions between human musicians and an AI.

System Overview

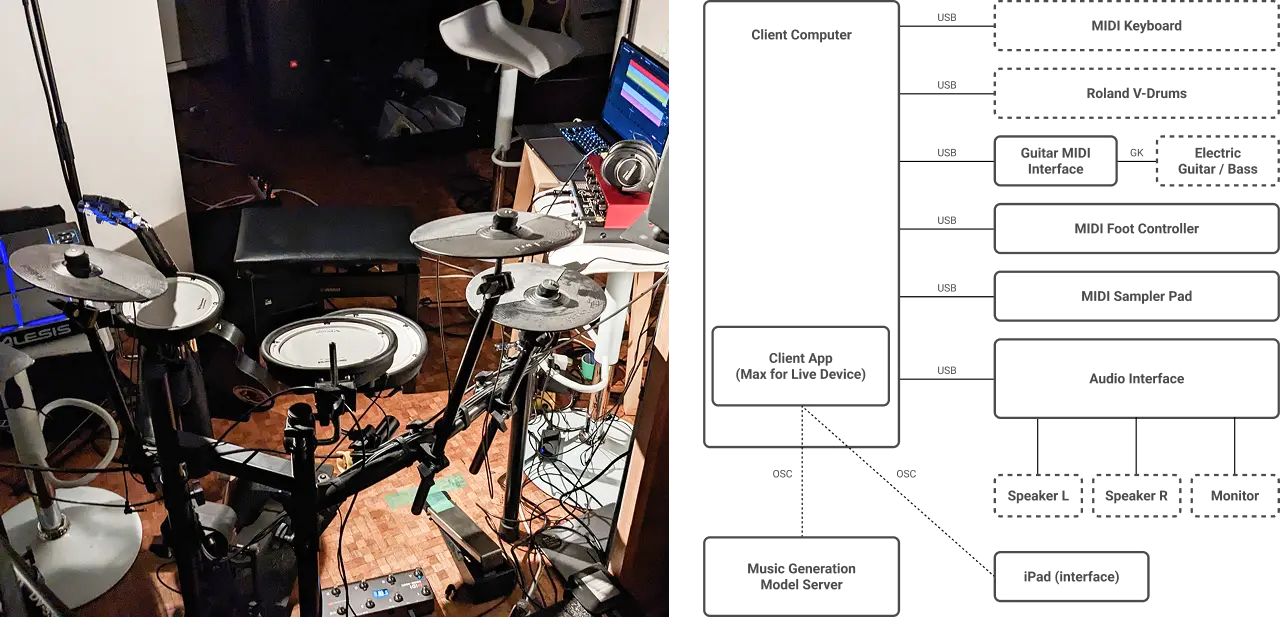

The system uses multi-track symbolic music generation driven by an autoregressive Transformer decoder to let human musicians and a computer improvise together in a jam session. It takes the phrases the user plays as input and responds to them, so that the two shape the music dynamically.

Users can selectively play any of the 4 tracks used in the performance (Melody, Accompaniment, Bass, Drums) by inputting their performance via MIDI.

- 4 Instruments Available to the User (Electronic piano with MIDI out, Electric guitar/bass equipped with pickups, Electronic drums)

- Melody and accompaniment input via any MIDI-equipped keyboard

- Drum input via the Roland V-Drums module

- Guitar and bass input (for the melody, accompaniment, and bass tracks) via Roland GK-3 / GK-3B pickups paired with a GI-20 MIDI interface

- Client PC (Runs Ableton Live for playback of performance and generated sequences)

- Generation Server (Executes sequence generation via the AI model)

The sound output from the client PC is played through speakers and monitored via headphones. For comfortable control during a performance, several MIDI devices are connected to the client PC so that the user can operate the user interface with pads or foot pedals.

Experience Design

Use Cases

Two basic usage patterns are provided:

- User-Led Pattern: The user plays alone for the first 8 bars. Eight bars after the performance start trigger, a generation trigger is sent to the AI, which generates the other tracks to fit the user's musical intent.

- AI-Led Pattern: The AI generates the initial 3 parts without conditioning, and the user then joins in with their own performance.

Unlike the installation version, this system omits the attention visualization and other visual elements, and consists purely of interaction centered on the music itself.

Generation Control

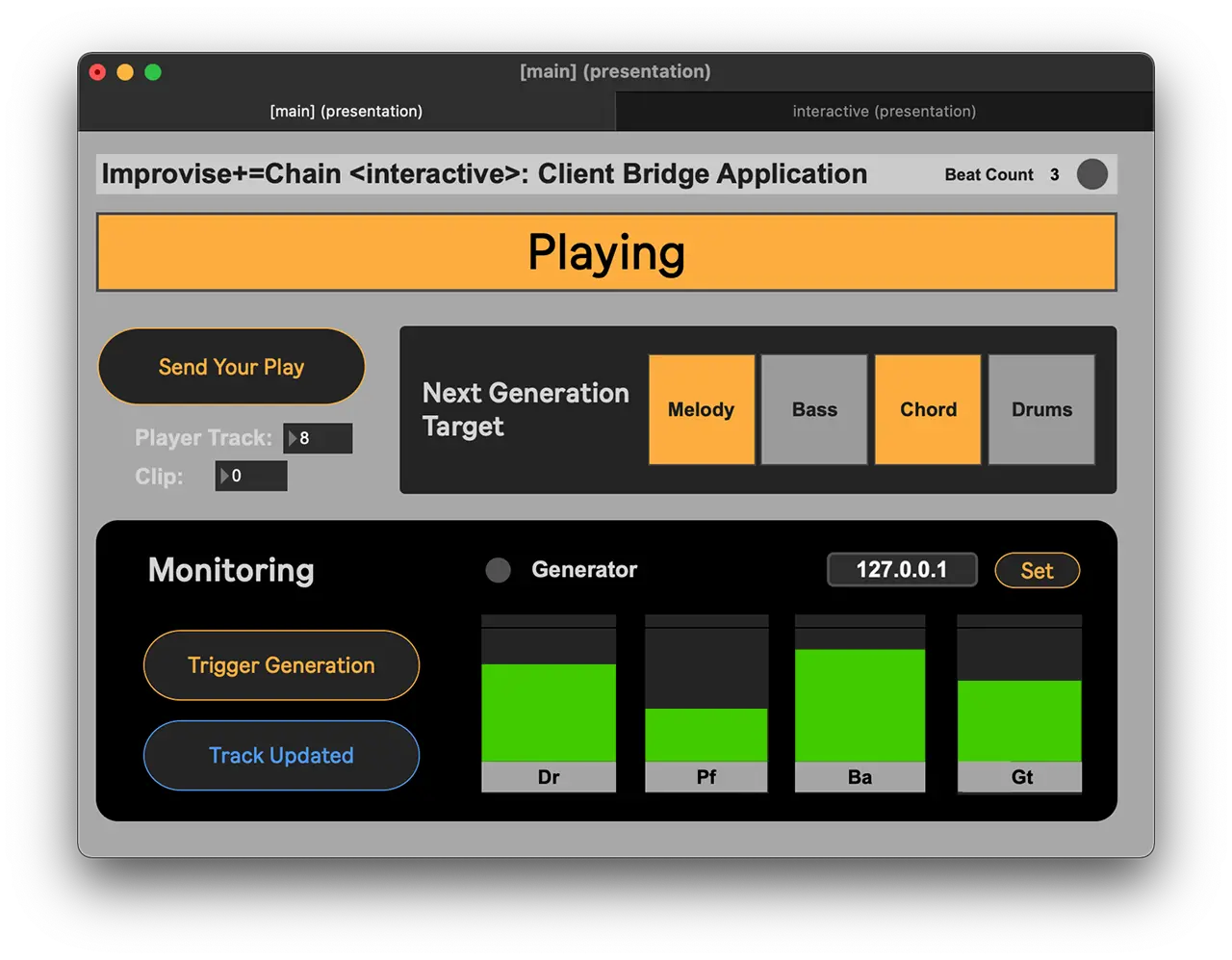

As in the installation piece, model generation is triggered every 8 bars. The user's performance is recorded continuously and sent to the generation server every 8 bars, where the other tracks are generated to match it.
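The 8-bar cycle described above can be sketched as follows. This is a minimal illustration under stated assumptions, not the project's code: `SessionClock`, `build_generation_request`, `run_session`, and the layout of the request dictionary are hypothetical, and notes are treated as opaque tuples rather than real MIDI events.

```python
from dataclasses import dataclass

BARS_PER_CYCLE = 8  # from the text: generation is triggered every 8 bars
TRACKS = ("melody", "accompaniment", "bass", "drums")


@dataclass
class SessionClock:
    """Counts bars and reports when an 8-bar cycle has just completed."""
    bars_elapsed: int = 0

    def advance_bar(self) -> bool:
        self.bars_elapsed += 1
        return self.bars_elapsed % BARS_PER_CYCLE == 0


def build_generation_request(recorded_notes, user_track):
    """Package the recorded bars as conditioning for the other tracks.

    The dictionary keys are a hypothetical payload format.
    """
    if user_track not in TRACKS:
        raise ValueError(f"unknown track: {user_track}")
    return {
        "condition_track": user_track,
        "condition_notes": list(recorded_notes),
        "generate_tracks": [t for t in TRACKS if t != user_track],
    }


def run_session(total_bars, user_track, notes_for_bar):
    """Record every bar and emit one request at the end of each 8-bar cycle."""
    clock = SessionClock()
    buffer = []
    requests = []
    for bar in range(total_bars):
        buffer.extend(notes_for_bar(bar))  # continuous recording
        if clock.advance_bar():
            requests.append(build_generation_request(buffer, user_track))
            buffer = []  # start recording the next cycle
    return requests
```

In a real setup the request would be sent to the generation server rather than collected in a list; the sketch only shows the timing and the split between the conditioning track and the generated tracks.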

Unlike in the installation, however, the tracks to regenerate are not selected randomly; the user chooses them explicitly. Using the provided user interface application, the user switches the instrument to be generated next. MIDI Control Change messages are assigned to an iPad, a MIDI foot controller, or drum sampling pads, depending on the instrument being played, so the interface can be operated hands-free even in the middle of a performance.
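One way to picture this selection logic is a small mapping from Control Change numbers to target tracks. The sketch below is an assumption-laden illustration: the CC numbers, the `TargetSelector` class, and the "value of 64 or more means pressed" rule (a common convention for switch-type controllers) are not taken from the actual application.

```python
# Hypothetical CC assignments; the real application's mapping is not documented here.
CC_TO_TRACK = {
    20: "melody",
    21: "accompaniment",
    22: "bass",
    23: "drums",
}


class TargetSelector:
    """Tracks which instrument the user has chosen to be generated next."""

    def __init__(self, initial="melody"):
        self.target = initial

    def handle_control_change(self, control, value):
        """Switch the target when a mapped CC arrives as a 'pressed' value.

        Unmapped controllers and release values (below 64) are ignored.
        Returns the current target either way.
        """
        track = CC_TO_TRACK.get(control)
        if track is not None and value >= 64:
            self.target = track
        return self.target
```

Because pads, foot controllers, and tablet apps can all emit Control Change messages, the same handler would serve every input device; only the physical controller differs per instrument.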

Tempo and Generation Parameter Control

The user can freely adjust both the tempo and the sampling parameter used during generation (Temperature: $$T \in [0.9, 1.5)$$).
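The text does not say how the temperature enters the model. A common technique, assumed here purely for illustration, is to divide the logits by $T$ before the softmax, so that a larger $T$ flattens the distribution and makes less likely tokens more probable. The function name `softmax_with_temperature` is hypothetical.

```python
import math


def softmax_with_temperature(logits, temperature):
    """Return probabilities from logits scaled by 1 / temperature.

    Assumption: standard temperature scaling; the actual model's
    sampling code is not described in the text.
    """
    if temperature <= 0:
        raise ValueError("temperature must be positive")
    scaled = [x / temperature for x in logits]
    peak = max(scaled)  # subtract the max for numerical stability
    exps = [math.exp(s - peak) for s in scaled]
    total = sum(exps)
    return [e / total for e in exps]
```

Under this assumption, values near 0.9 keep the output close to the model's most likely continuation, while values approaching 1.5 produce more varied and less predictable phrases.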

Four controls ("Tempo Up", "Tempo Down", "Temperature Up", "Temperature Down") are assigned to an iPad, a MIDI foot switch (for guitar or keyboard), or sampling pads (for drums), depending on the instrument being played. They can be operated in real time and change the tempo in steps of ±5 and the temperature in steps of ±0.1.
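The four controls can be sketched as a small state object. The step sizes and the temperature range come from the text; everything else is an assumption made for illustration, including the `PerformanceParams` class, the default values, and the tempo limits of 40 and 240. Temperature is stored in integer tenths to avoid floating-point drift, and the half-open range $[0.9, 1.5)$ is interpreted as allowing 0.9 through 1.4 in 0.1 steps.

```python
from dataclasses import dataclass

TEMPO_STEP = 5          # from the text: tempo changes in steps of 5
TEMP_MIN_TENTHS = 9     # 0.9, inclusive lower bound from the text
TEMP_MAX_TENTHS = 14    # 1.4, the largest 0.1 step below the exclusive bound 1.5
TEMPO_MIN, TEMPO_MAX = 40, 240  # hypothetical limits, not stated in the text


@dataclass
class PerformanceParams:
    tempo: int = 120        # hypothetical default
    temp_tenths: int = 10   # temperature 1.0, hypothetical default

    @property
    def temperature(self) -> float:
        return self.temp_tenths / 10

    def apply(self, control: str) -> None:
        """Apply one of the four named controls, clamping to the valid range."""
        if control == "Tempo Up":
            self.tempo = min(self.tempo + TEMPO_STEP, TEMPO_MAX)
        elif control == "Tempo Down":
            self.tempo = max(self.tempo - TEMPO_STEP, TEMPO_MIN)
        elif control == "Temperature Up":
            self.temp_tenths = min(self.temp_tenths + 1, TEMP_MAX_TENTHS)
        elif control == "Temperature Down":
            self.temp_tenths = max(self.temp_tenths - 1, TEMP_MIN_TENTHS)
        else:
            raise ValueError(f"unknown control: {control}")
```

Clamping rather than wrapping means that repeated presses at either limit are harmless, which suits controls operated by foot or pad during a performance.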

Conclusion and Future Directions

Improvise+=Chain [Interactive] is an example of a system that applies the autoregressive music generation model, which served as the backend of the installation, to a session between a human musician (the user) and a computer. By exploiting the characteristics of the multi-track music generation model, the system can conditionally generate, in real time, performances for the instruments the user is not currently playing.

If tighter control over variable tempo, time signatures, and chord progressions can be achieved, we believe the system could be used for richer expression and as an idea-support tool for composition.

To support composition, the interaction design needs mechanisms for saving ideas and for choosing, naturally and while playing, between keeping one's own performance and adopting the computer's. A mechanism for explicit control at a level broader than individual phrases, such as chord progressions and keys, is also required.

To enable such interactions with the AI model during a performance, a meticulously designed user interface will be indispensable, one bold enough to go beyond existing on-screen operations and simple foot-pedal controls.